This post discusses some options for accessing PostgreSQL databases from Go. I'll only be covering low-level packages that provide access to the underlying database; this post is not about ORMs, which were covered earlier in this blog. The full source code accompanying this post is on GitHub.

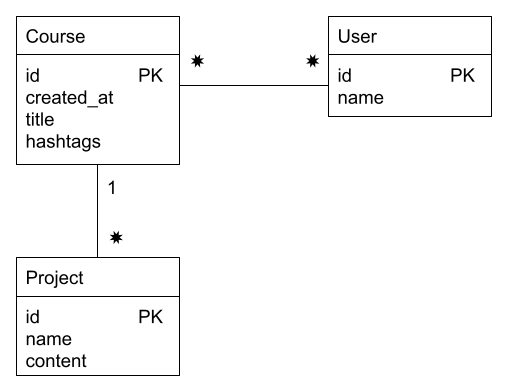

We're going to be using a simple data model that could serve as a basis for an online course system (like Coursera):

There is a many-to-many relationship between courses and users (a user can take any number of courses, and each course has multiple users signed up), and a one-to-many relationship between courses and projects (a course has multiple projects, but a project belongs to a single course).

The SQL to create these tables is:

create table if not exists courses (

id bigserial primary key,

created_at timestamp(0) with time zone not null default now(),

title text not null,

hashtags text[]

);

create table if not exists projects (

id bigserial primary key,

name text not null,

content text not null,

course_id bigint not null references courses (id) on delete cascade

);

create table if not exists users (

id bigserial primary key,

name text not null

);

create table if not exists course_user (

course_id bigint not null references courses (id) on delete cascade,

user_id bigint not null references users (id) on delete cascade,

constraint course_user_key primary key (course_id, user_id)

);

Note that the hashtags column is of the PostgreSQL array type: hashtags text[]; this is on purpose, to demonstrate how custom PostgreSQL types are modeled in the various Go approaches presented here.

database/sql with the pq driver

Probably the most common way to access PostgreSQL databases in Go is using the standard library database/sql, along with pq as the database driver. The full code for this approach, applied to our sample database is available here; I'll present some relevant bits and pieces below:

import (

"database/sql"

"fmt"

"log"

"os"

_ "github.com/lib/pq"

)

// Check is a helper that terminates the program with err.Error() logged in

// case err is not nil.

func Check(err error) {

if err != nil {

log.Fatal(err)

}

}

func main() {

db, err := sql.Open("postgres", os.Getenv("MOOCDSN"))

Check(err)

defer db.Close()

// ... use db here

}

There's the usual blank import of the driver package, which registers itself with database/sql; thereafter, the "postgres" name can be used as a driver name to pass to sql.Open. The path to the database is passed in an env var; for example, it could be something like:

MOOCDSN=postgres://testuser:testpassword@localhost/testmooc

If the database was created with the name testmooc, with the user testuser having access to it.

Following this initialization, we can issue queries to the database via db. Before we look at sample queries, here's the data model translated to Go types:

type course struct {

Id int64

CreatedAt time.Time

Title string

Hashtags []string

}

type user struct {

Id int64

Name string

}

type project struct {

Id int64

Name string

Content string

}

Note that, unlike with ORMs, relationships between tables are not captured here. A course does not have a collection of projects; this is something we need to set up manually when querying the DB. Another thing to note is that Hashtags has the type []string which will be mapped to PostgreSQL's text[].

Here's a sample function wrapping an SQL query:

func dbAllCoursesForUser(db *sql.DB, userId int64) ([]course, error) {

rows, err := db.Query(`

select courses.id, courses.created_at, courses.title, courses.hashtags

from courses

inner join course_user on courses.id = course_user.course_id

where course_user.user_id = $1`, userId)

if err != nil {

return nil, err

}

defer rows.Close()

var courses []course

for rows.Next() {

var c course

err = rows.Scan(&c.Id, &c.CreatedAt, &c.Title, pq.Array(&c.Hashtags))

if err != nil {

return nil, err

}

courses = append(courses, c)

}

if err := rows.Err(); err != nil {

return nil, err

}

return courses, nil

}

Given a user ID, this function obtains all the courses the user is signed up for, by join-ing the courses table with the course_user linking table. database/sql requires reading the result of the query in a scanning loop, and manually placing the results into structs; it's not aware of any mapping between Go structs and SQL tables. PostgreSQL arrays are read by wrapping with a pq.Array type.

Here's a slightly more involved query, which joins three tables to obtain all the projects the user has to finish (there could be multiple projects per course, and a user could be signed up for multiple courses):

func dbAllProjectsForUser(db *sql.DB, userId int64) ([]project, error) {

rows, err := db.Query(`

select projects.id, projects.name, projects.content

from courses

inner join course_user on courses.id = course_user.course_id

inner join projects on courses.id = projects.course_id

where course_user.user_id = $1`, userId)

if err != nil {

return nil, err

}

defer rows.Close()

var projects []project

for rows.Next() {

var p project

err = rows.Scan(&p.Id, &p.Name, &p.Content)

if err != nil {

return nil, err

}

projects = append(projects, p)

}

if err := rows.Err(); err != nil {

return nil, err

}

return projects, nil

}

While the SQL is more complicated, the rest of the code is almost identical to the earlier function.

pgx

While pq has been around for a long time and has served the Go community well, it hasn't been very actively maintained recently. In fact, if you read all the way to the end of its README, you'll find this in the Status section:

This package is effectively in maintenance mode and is not actively developed. Small patches and features are only rarely reviewed and merged. We recommend using pgx which is actively maintained.

So what is pgx? It's a driver and toolkit for PostgreSQL:

pgx aims to be low-level, fast, and performant, while also enabling PostgreSQL-specific features that the standard database/sql package does not allow for.

The driver component of pgx can be used alongside the standard database/sql package.

The pgx package has two distinct modes of operation:

- It can serve as a standard driver for database/sql.

- It can serve as a direct interface to PostgreSQL, which isn't beholden to the standard API of database/sql, and thus can employ PostgreSQL-specific features and code paths.

To use option (1), we can reuse 99% of the previous sample (the database/sql interface is really very well standardized!). All we have to do is replace the driver import with:

_ "github.com/jackc/pgx/v4/stdlib"

And then change the sql.Open call to invoke the pgx driver:

db, err := sql.Open("pgx", os.Getenv("MOOCDSN"))

We don't have to update the rest of the code [1].

What about the direct interface? For this, we'll have to rejigger our code a bit, since the types are slightly different. The full code for this is available here; here are the salient changes:

ctx := context.Background()

conn, err := pgx.Connect(ctx, os.Getenv("MOOCDSN"))

Check(err)

defer conn.Close(ctx)

Instead of using sql.Open, we call pgx.Connect instead. When it's time to query the DB, our function for grabbing all the courses a user is signed up for would be:

func dbAllCoursesForUser(ctx context.Context, conn *pgx.Conn, userId int64) ([]course, error) {

rows, err := conn.Query(ctx, `

select courses.id, courses.created_at, courses.title, courses.hashtags

from courses

inner join course_user on courses.id = course_user.course_id

where course_user.user_id = $1`, userId)

if err != nil {

return nil, err

}

defer rows.Close()

var courses []course

for rows.Next() {

var c course

err = rows.Scan(&c.Id, &c.CreatedAt, &c.Title, &c.Hashtags)

if err != nil {

return nil, err

}

courses = append(courses, c)

}

if err := rows.Err(); err != nil {

return nil, err

}

return courses, nil

}

Note that the Go struct types representing table entries remain exactly the same. Reading query results with pgx is very similar to database/sql, but array types no longer need to be wrapped in pq.Array, since pgx supports natively reading PostgreSQL arrays into Go slices.

So, what do we get by using pgx instead of database/sql? According to the feature list on its README, quite a lot, including native support for custom PostgreSQL types, JSON, an advanced connection pool and a whole slew of performance-oriented features. Most notably, pgx uses the PostgreSQL binary protocol directly for faster marshaling and unmarshaling of types. According to pgx's benchmarks, there are considerable performance differences in some cases [2].

sqlx

We've seen a few examples of non-trivial SQL queries being scanned into Go objects so far; all of them involve the same pattern:

- The query is submitted

- The result is iterated row by row

- Each row gets manually unmarshaled into struct fields

One of the biggest complaints about database/sql in Go is the verbosity of this process; particularly the second and third steps above. Why can't we just say:

var courses []course

db.FillInQueryResults(&courses, ....)

After all, many packages in the Go standard library already work this way; for example encoding/json, etc. The reason is the variety of types SQL supports. While JSON has relatively few supported types, SQL has many; moreover, SQL types differ by database. Therefore, it was fairly tricky for the Go project to offer such advanced scanning capabilities in the standard library, and we have to rely on third-party packages instead.

Luckily, an abundance of third-party packages exists just for this purpose. One of the most prominent is sqlx. Let's revisit our sample database querying code, this time using sqlx. The full code for this is available here.

The database setup code is very similar to the vanilla database/sql version:

import (

"fmt"

"log"

"os"

"github.com/jmoiron/sqlx"

_ "github.com/lib/pq"

)

func Check(err error) {

if err != nil {

log.Fatal(err)

}

}

func main() {

db, err := sqlx.Open("postgres", os.Getenv("MOOCDSN"))

Check(err)

defer db.Close()

// ... use db here

}

sqlx.Open wraps sql.Open and uses the same database driver registration mechanism. The type it returns is sqlx.DB, which extends sql.DB with some convenience methods. Here's our function to query all courses a user is signed up for, this time using sqlx:

func dbAllCoursesForUser(db *sqlx.DB, userId int64) ([]course, error) {

var courses []course

err := db.Select(&courses, `

select courses.id, courses.created_at, courses.title, courses.hashtags

from courses

inner join course_user on courses.id = course_user.course_id

where course_user.user_id = $1`, userId)

if err != nil {

return nil, err

}

return courses, nil

}

This is just what we wanted! The code scans the result into a slice of course objects directly, without needing the row-by-row loop. sqlx accomplishes this feat by using reflection - it examines the underlying type of the struct in the slice and maps DB columns to struct fields automatically. It sometimes needs help, though; for example, our course struct has to be modified as follows:

type course struct {

Id int64

CreatedAt time.Time `db:"created_at"`

Title string

Hashtags pq.StringArray

}

Since sqlx won't map the database created_at column to the CreatedAt field automatically, we have to provide an instruction to do so explicitly in a field tag.

sqlx requires an underlying database/sql driver for the actual DB interactions. In the example above, we've been using pq, but the stdlib driver of pgx can be used as well. Unfortunately, sqlx does not support the native pgx driver. However, a different package called scany does support both the native and the stdlib drivers of pgx. I wrote another version of this sample, using scany; I won't show this code here, since it's very similar to the sqlx example, but you can find it on GitHub.

Is sqlx worth it?

Looking at our dbAllCoursesForUser function, the version using sqlx saves about 14 lines of code compared to the vanilla scan with database/sql. I'm on record saying that ORMs are unlikely to be worthwhile in Go, but what about sqlx? Is saving 14 LOC per DB query function worth the trouble of an additional dependency, with its potential quirks, bugs and leaky abstractions?

This question is hard to answer globally, so I'll just say "it depends".

On one hand, 14 LOC per DB query is really not much. Say you have 50 possible SQL queries in your application, this saves 700 LOC of trivial and repetitive code. Is that a lot? In most cases, almost certainly not. In the end, it all boils down to the central thesis of the benefits of extra dependencies as a function of effort.

On the other hand, as opposed to ORMs, packages like sqlx and scany provide a fairly focused utility with not very much magic involved. After all, the standard library already has similar tools built in for unmarshaling JSON, so this is a tried-and-true method that can work for data in relational databases as well. Since the utility of these packages is focused, they are not terribly hard to tear out of a codebase and replace, in case things don't go as expected, so they also present a considerably smaller risk than going all-in on ORMs.

To conclude, packages like sqlx and scany provide a middle ground between raw SQL access and full-blown ORMs; this means mid-of-the-way advantages as well as disadvantages.

| [1] | There's a small nuance to be aware of if you're following along with the code samples, trying to run them. To be able to read PostgreSQL arrays in Go using the database/sql driver component of pgx, we still need to import pq in order to use its pq.Array type. This type provides custom readers that are required to read custom DB types via the standard interface. When using the pgx direct interface, this is not necessary since pgx supports reading PostgreSQL arrays directly into slices. See this pgx issue for additional information. |

| [2] | As usual with benchmarks, YMMV. Every case is different, and I imagine that in many scenarios the network overhead of a PostgreSQL connection will subsume any difference observable between different drivers. |